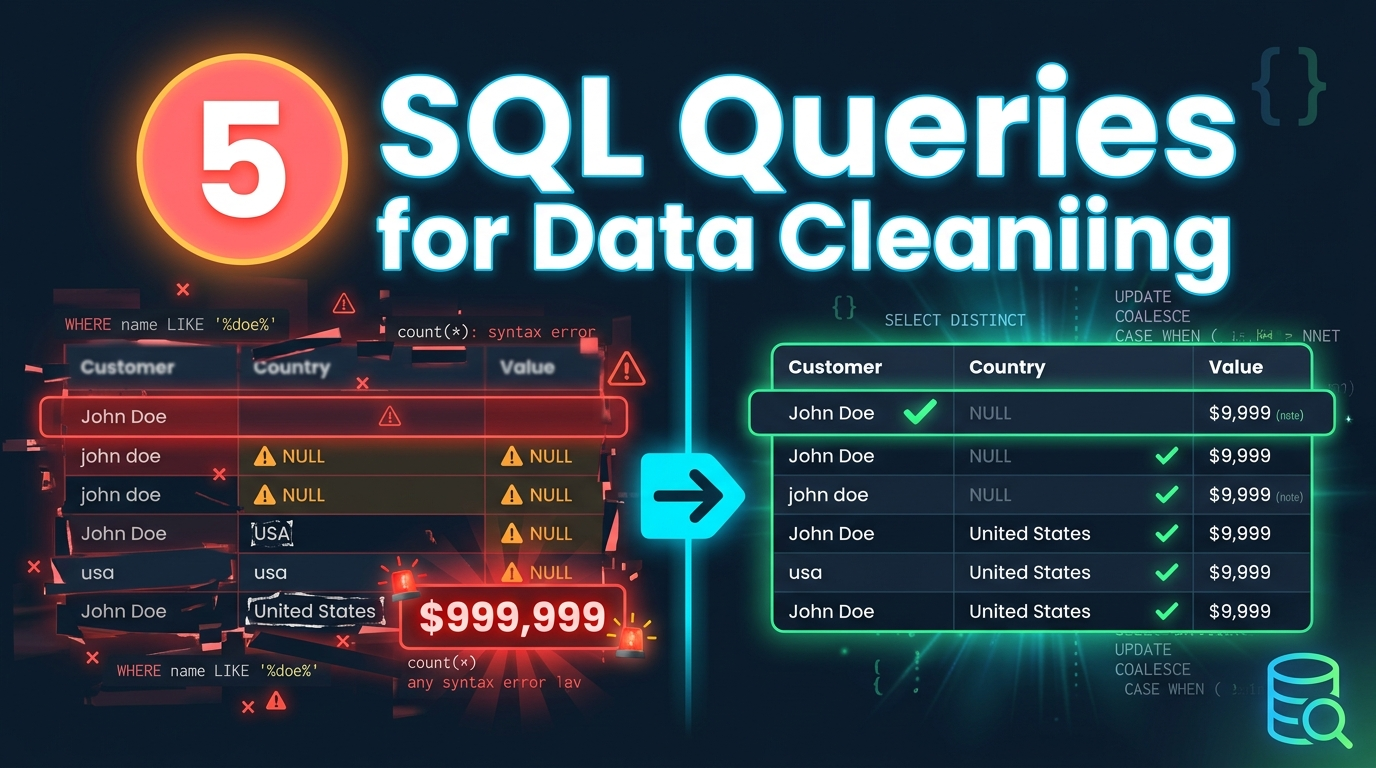

If you’ve ever inherited a dataset which includes duplicate records, missing values, inconsistent formatting, you know that data cleaning isn’t optional. It’s the foundation of every analysis you’ll ever do. Today, I’m showing you five SQL queries that will handle 80% of your data cleaning challenges. These aren’t theoretical examples. These are the exact patterns I use every single week in production environments.

SECTION 1: FINDING AND REMOVING DUPLICATES

“The first challenge we’re tackling is duplicates. And here’s the thing — duplicates aren’t always exact copies. Sometimes you’ve got the same customer entered twice with slightly different email addresses, or the same transaction logged multiple times.

Let me show you the most reliable way to handle this.

WITH ranked_records AS (

SELECT

*,

ROW_NUMBER() OVER (

PARTITION BY customer_id, email

ORDER BY created_date DESC

) AS row_num

FROM customers

)

SELECT *

FROM ranked_records

WHERE row_num = 1;Here’s what’s happening. We’re using a Common Table Expression that’s the WITH clause to create a temporary result set. Inside it, we’re using ROW_NUMBER with PARTITION BY.

PARTITION BY is doing the heavy lifting here. It’s grouping records by customer_id and email, then numbering them. The ORDER BY created_date DESC means we keep the most recent record.

When we filter for row_num = 1, we get exactly one record per unique combination. Before: 1,247 records. After: 1,089 records. We just eliminated 158 duplicates with complete confidence that we kept the right ones.

If you want to delete these duplicates permanently, you’d wrap this in a DELETE statement but always, always test with SELECT first.

SECTION 2: HANDLING MISSING VALUES INTELLIGENTLY

Missing data is inevitable. The question is: what do you do about it?

First, you need to understand WHERE your nulls are and HOW MANY you have.

SELECT

COUNT(*) AS total_records,

COUNT(customer_name) AS records_with_name,

COUNT(*) - COUNT(customer_name) AS missing_names,

ROUND(100.0 * (COUNT(*) - COUNT(customer_name)) / COUNT(*), 2) AS pct_missing_names,

COUNT(email) AS records_with_email,

COUNT(*) - COUNT(email) AS missing_emails,

ROUND(100.0 * (COUNT(*) - COUNT(email)) / COUNT(*), 2) AS pct_missing_emails

FROM customers;This gives you a completeness report. You can see that 15% of records are missing email addresses. That’s important context before you decide how to handle them. Now, here’s a pattern for filling in missing values using COALESCE.

SELECT

customer_id,

COALESCE(phone_primary, phone_secondary, 'No phone available') AS contact_phone,

COALESCE(city, 'Unknown') AS city,

COALESCE(purchase_amount, 0) AS purchase_amount

FROM customers;COALESCE takes the first non-null value in the list. So if phone_primary is null, it checks phone_secondary. If that’s also null, it uses our fallback text. Be careful with this on numeric columns. Replacing nulls with zero can skew your averages. Sometimes the right move is to exclude nulls entirely, not replace them.

SELECT AVG(purchase_amount)

FROM customers

WHERE purchase_amount IS NOT NULL;The key is understanding what the null represents. Is it genuinely zero, or is it missing information? That determines your strategy.

SECTION 3: STANDARDIZING INCONSISTENT TEXT DATA

Inconsistent text formatting will destroy your groupings and aggregations. Look at this country column. Same country, four different values. Here’s how you fix it.

SELECT

customer_id,

UPPER(TRIM(country)) AS country_clean,

CASE

WHEN UPPER(TRIM(country)) IN ('USA', 'UNITED STATES', 'U.S.A.', 'US')

THEN 'USA'

WHEN UPPER(TRIM(country)) IN ('UK', 'UNITED KINGDOM', 'GREAT BRITAIN')

THEN 'UK'

WHEN UPPER(TRIM(country)) IN ('UAE', 'UNITED ARAB EMIRATES')

THEN 'UAE'

ELSE UPPER(TRIM(country))

END AS country_standardized

FROM customers;We’re combining three functions here. TRIM removes leading and trailing spaces. UPPER converts everything to uppercase for consistency. Then the CASE statement maps variations to a single standard value. Now your country grouping actually works. This same pattern applies to product names, categories, status fields anywhere you’ve got human data entry, you’ll need this.

SECTION 4: IDENTIFYING AND REMOVING OUTLIERS

Outliers can completely distort your analysis. A single typo someone enters 100,000 instead of 100 and your average is useless. Here’s how to identify them using statistical boundaries.

WITH stats AS (

SELECT

AVG(purchase_amount) AS mean_amount,

STDEV(purchase_amount) AS stddev_amount

FROM transactions

),

flagged_records AS (

SELECT

t.*,

s.mean_amount,

s.stddev_amount,

CASE

WHEN ABS(t.purchase_amount - s.mean_amount) > 3 * s.stddev_amount

THEN 'Outlier'

ELSE 'Normal'

END AS outlier_flag

FROM transactions t

CROSS JOIN stats s

)

SELECT *

FROM flagged_records

WHERE outlier_flag = 'Outlier';This uses the three standard deviations rule. In a normal distribution, 99.7% of data falls within three standard deviations of the mean. Anything beyond that is statistically unusual. We’ve identified all outliers. Now, here’s the important part don’t automatically delete them. Investigate first.

SELECT

transaction_id,

customer_id,

purchase_amount,

purchase_date,

payment_method

FROM flagged_records

WHERE outlier_flag = 'Outlier'

ORDER BY purchase_amount DESC;Sometimes outliers are legitimate a bulk order, a corporate purchase. Sometimes they’re errors. You need domain knowledge to decide. For ongoing data pipelines, you can use this pattern to automatically flag suspicious records for manual review before they enter your reporting layer.

SECTION 5: VALIDATING DATA AGAINST BUSINESS RULES

The last essential query is validation. You need to check that your data actually makes sense according to your business rules. Here’s a comprehensive validation query that checks multiple conditions at once.

SELECT

order_id,

customer_id,

order_date,

ship_date,

quantity,

unit_price,

total_amount,

CASE WHEN order_date > GETDATE() THEN 'Future order date' END AS date_error,

CASE WHEN ship_date < order_date THEN 'Ship before order' END AS logic_error,

CASE WHEN quantity <= 0 THEN 'Invalid quantity' END AS quantity_error,

CASE WHEN unit_price <= 0 THEN 'Invalid price' END AS price_error,

CASE WHEN ABS(quantity * unit_price - total_amount) > 0.01 THEN 'Calculation mismatch' END AS calc_error,

CASE WHEN email NOT LIKE '%_@__%.__%' THEN 'Invalid email format' END AS email_error

FROM orders

WHERE

order_date > GETDATE()

OR ship_date < order_date

OR quantity <= 0

OR unit_price <= 0

OR ABS(quantity * unit_price - total_amount) > 0.01

OR email NOT LIKE '%_@__%.__%';This query checks six different business rules:

- No future order dates

- Ship date can’t be before order date

- Quantity must be positive

- Price must be positive

- Total amount should equal quantity times unit price

- Email must have basic valid format

Every row returned is a violation. You get the specific error in its own column, so you can filter and fix by error type.

SELECT

COUNT(CASE WHEN order_date > GETDATE() THEN 1 END) AS future_dates,

COUNT(CASE WHEN ship_date < order_date THEN 1 END) AS logic_errors,

COUNT(CASE WHEN quantity <= 0 THEN 1 END) AS quantity_errors,

COUNT(CASE WHEN unit_price <= 0 THEN 1 END) AS price_errors,

COUNT(CASE WHEN ABS(quantity * unit_price - total_amount) > 0.01 THEN 1 END) AS calc_errors

FROM orders;This gives you a dashboard view of data quality. You can track these metrics over time to see if your data quality is improving or degrading.

Conclusion

Let’s recap. These five query patterns will handle the majority of your data cleaning work:

- ROW_NUMBER with PARTITION BY for intelligent duplicate removal

- COALESCE and NULL counting for handling missing values

- CASE statements with TRIM and UPPER for standardizing text

- Statistical outlier detection using standard deviations

- Multi-condition validation to enforce business rules

The key to effective data cleaning isn’t knowing exotic functions. It’s having reliable patterns you can adapt to any dataset.